GPUDrive: Multi-Agent Reinforcement Learning Driving Simulator

Ran multi-agent driving simulations using GPUDrive, training IPPO agents and comparing CPU vs GPU performance on the Waymo Mini dataset.

GPUDrive Project Details

GPUDrive is a high-performance, data-driven simulator designed to support large-scale multi-agent autonomous driving research by combining real-world driving data with massively parallel simulation. The framework is capable of running over one million simulation steps per second while maintaining a lightweight memory footprint, enabling thousands of environments and hundreds of agents to be simulated simultaneously. In the original paper, agents are trained using Independent Proximal Policy Optimization (IPPO), where each agent learns its own policy independently without communication, allowing efficient scaling across large datasets such as the Waymo Open Motion Dataset. GPUDrive addresses common simulation bottlenecks through techniques such as polyline decimation, bounding volume hierarchies for collision filtering, and dynamic memory allocation.
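To make the IPPO description concrete, here is a minimal sketch of the clipped PPO surrogate evaluated separately for each agent. It is written in pure Python for readability and is not GPUDrive's training code. The function names, the batch layout (`new_logp`, `old_logp`, `adv`), and the clipping value of 0.2 are illustrative assumptions.

```python
import math


def ppo_clip_loss(new_logp, old_logp, advantages, clip_eps=0.2):
    """Clipped PPO surrogate loss (to be minimized) for ONE agent's batch.

    new_logp / old_logp: log-probabilities of the taken actions under the
    current and the data-collecting policy. advantages: advantage estimates.
    """
    total = 0.0
    for nl, ol, adv in zip(new_logp, old_logp, advantages):
        ratio = math.exp(nl - ol)  # pi_new(a|s) / pi_old(a|s)
        clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
        # Pessimistic (lower) bound of the unclipped and clipped objectives.
        total += min(ratio * adv, clipped * adv)
    return -total / len(advantages)


def ippo_losses(batches, clip_eps=0.2):
    """IPPO sketch: each agent gets its own loss from its own data only.

    batches maps a (hypothetical) agent id to that agent's batch dict.
    No information is exchanged between agents when computing the losses.
    """
    return {
        agent_id: ppo_clip_loss(b["new_logp"], b["old_logp"], b["adv"], clip_eps)
        for agent_id, b in batches.items()
    }
```

Each agent's loss depends only on that agent's own trajectories, which is what lets the method scale with the number of agents: adding an agent adds one more independent batch rather than a joint optimization problem.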

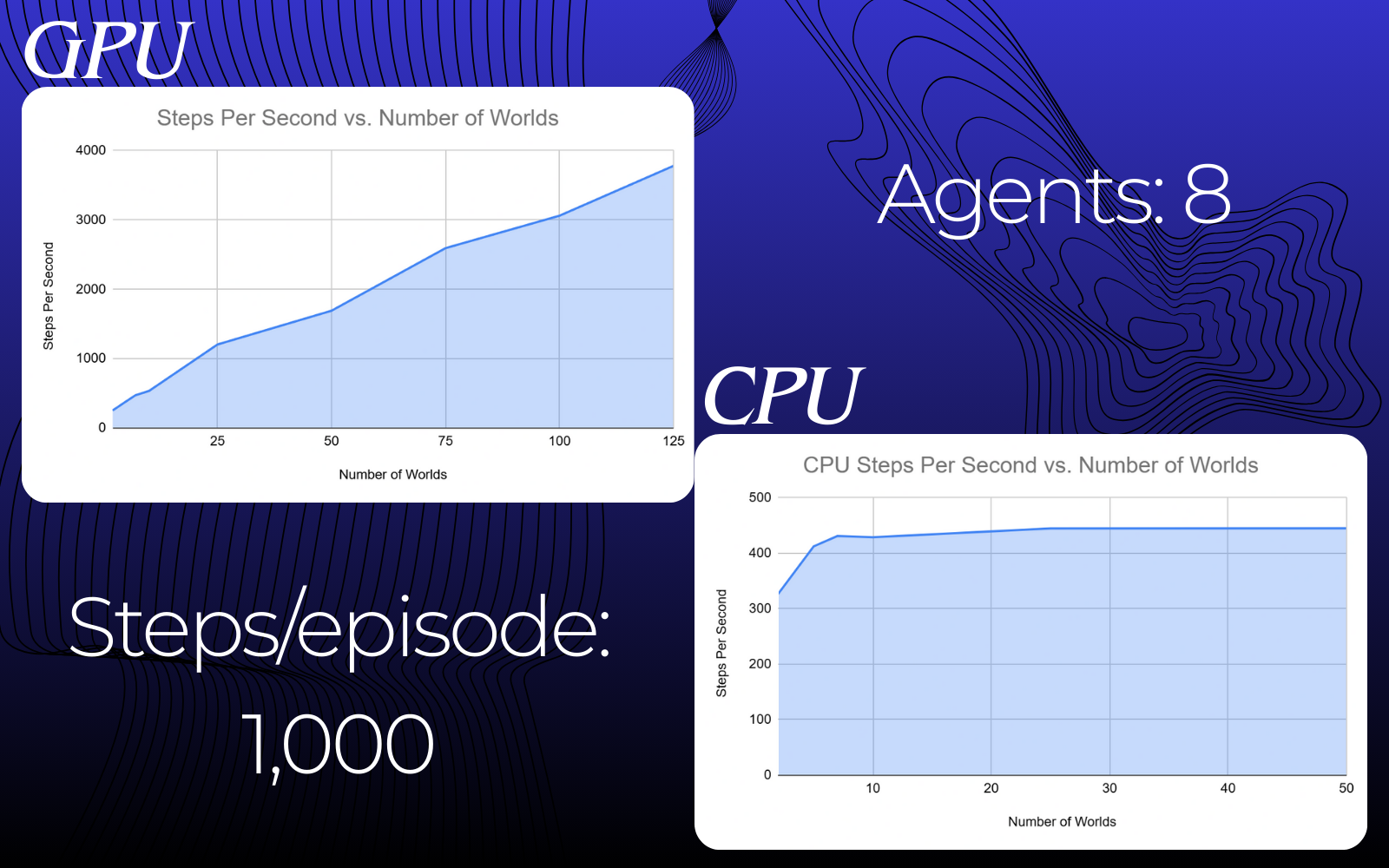

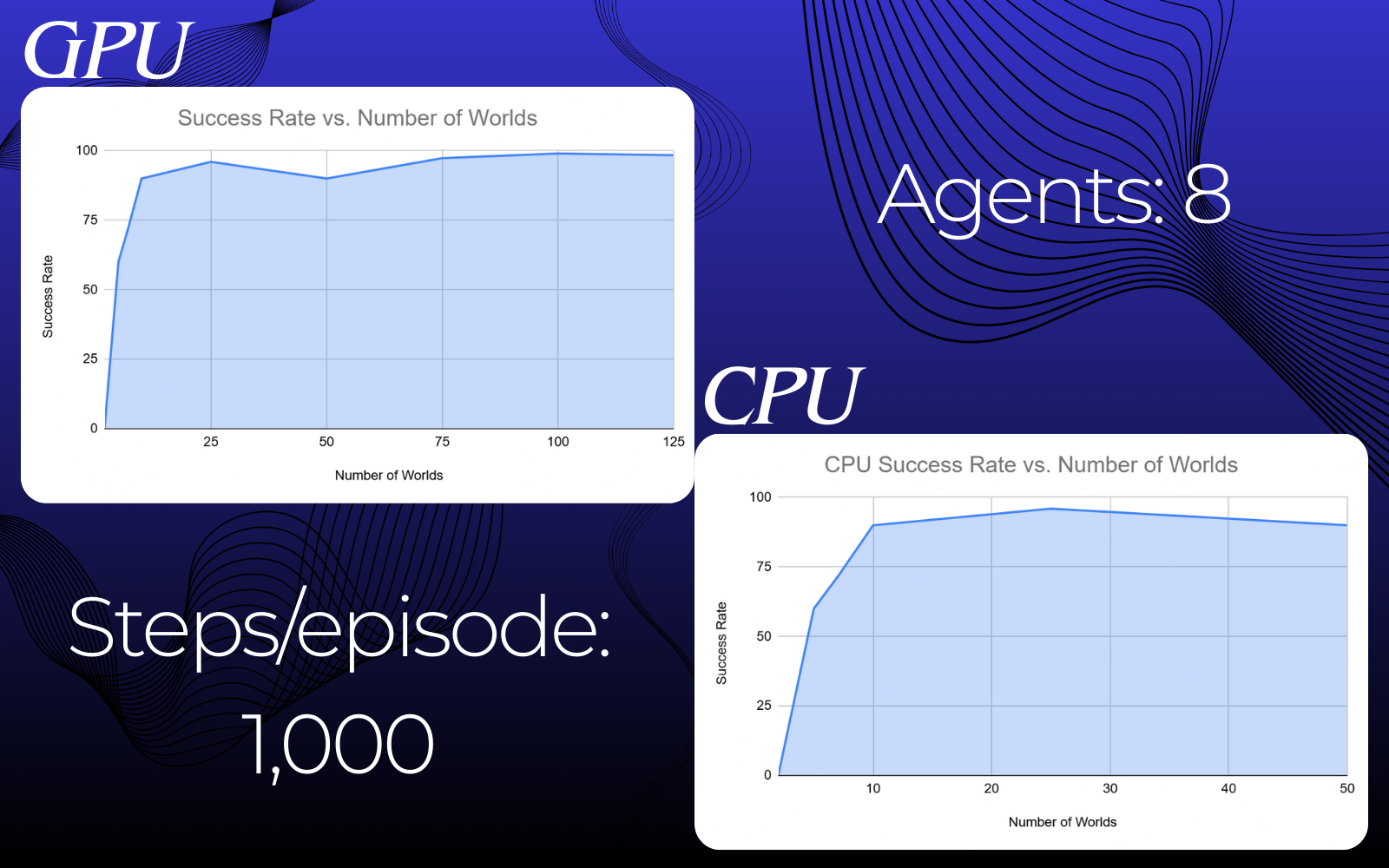

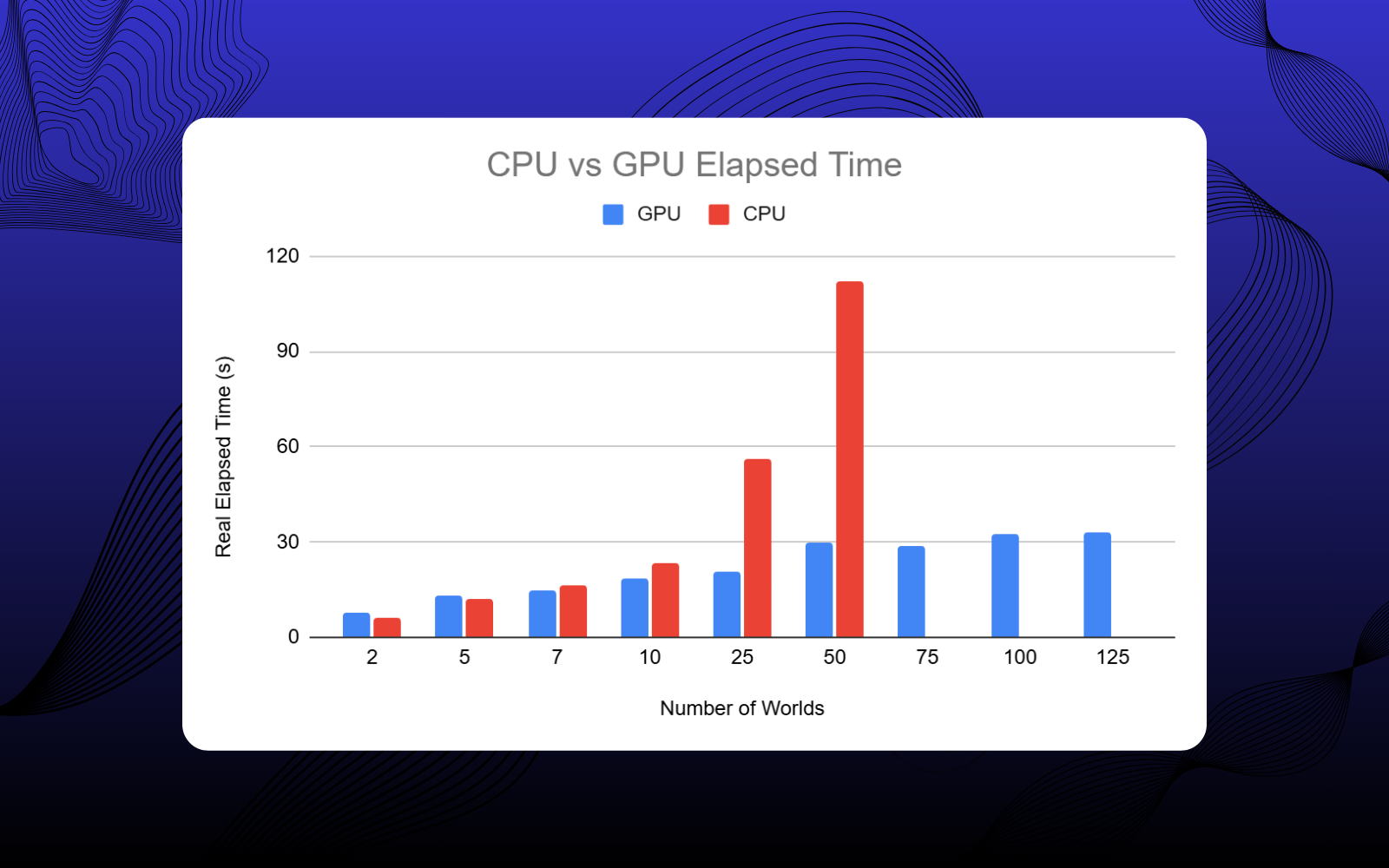

In this project, we reproduced and experimented with GPUDrive by configuring the simulator with the Waymo Mini Dataset and implementing a custom Python training pipeline. We trained agents using IPPO and evaluated performance under varying levels of parallelism by changing the number of simulated worlds, from small-scale setups up to 100 parallel environments. Our experiments compared CPU and GPU execution to measure resource trade-offs, focusing on steps per second, training time, and success rates. During setup, we ran into limited documentation, unresolved GitHub issues, and CUDA compatibility problems, particularly on Windows-based systems; we ultimately achieved stable execution on Ubuntu.
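A throughput comparison of this kind can be sketched as a small timing harness. The code below is an illustration, not the project's actual pipeline: `measure_steps_per_second`, `dummy_step`, and `sweep` are hypothetical names, the dummy step function stands in for a real simulator call, and counting one step per world per call is an assumption (a real run might count agent steps instead).

```python
import time


def measure_steps_per_second(step_fn, num_worlds, num_steps,
                             timer=time.perf_counter):
    """Time num_steps calls of step_fn and return world-steps per second.

    step_fn(num_worlds) is assumed to advance all worlds by one step.
    """
    if num_worlds <= 0 or num_steps <= 0:
        raise ValueError("num_worlds and num_steps must be positive")
    start = timer()
    for _ in range(num_steps):
        step_fn(num_worlds)
    elapsed = timer() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return num_worlds * num_steps / elapsed


def dummy_step(num_worlds):
    """Placeholder workload; replace with the real environment step."""
    return [0.5 * w for w in range(num_worlds)]


def sweep(world_counts, num_steps=100, step_fn=dummy_step):
    """Measure throughput for each number of parallel worlds."""
    return {
        n: measure_steps_per_second(step_fn, n, num_steps)
        for n in world_counts
    }
```

Calling `sweep([1, 10, 100])` once with a CPU-backed step function and once with a GPU-backed one would give the two curves to compare. When timing GPU work, the step function should block until the device has finished, otherwise asynchronous kernel launches make the measured time too short.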

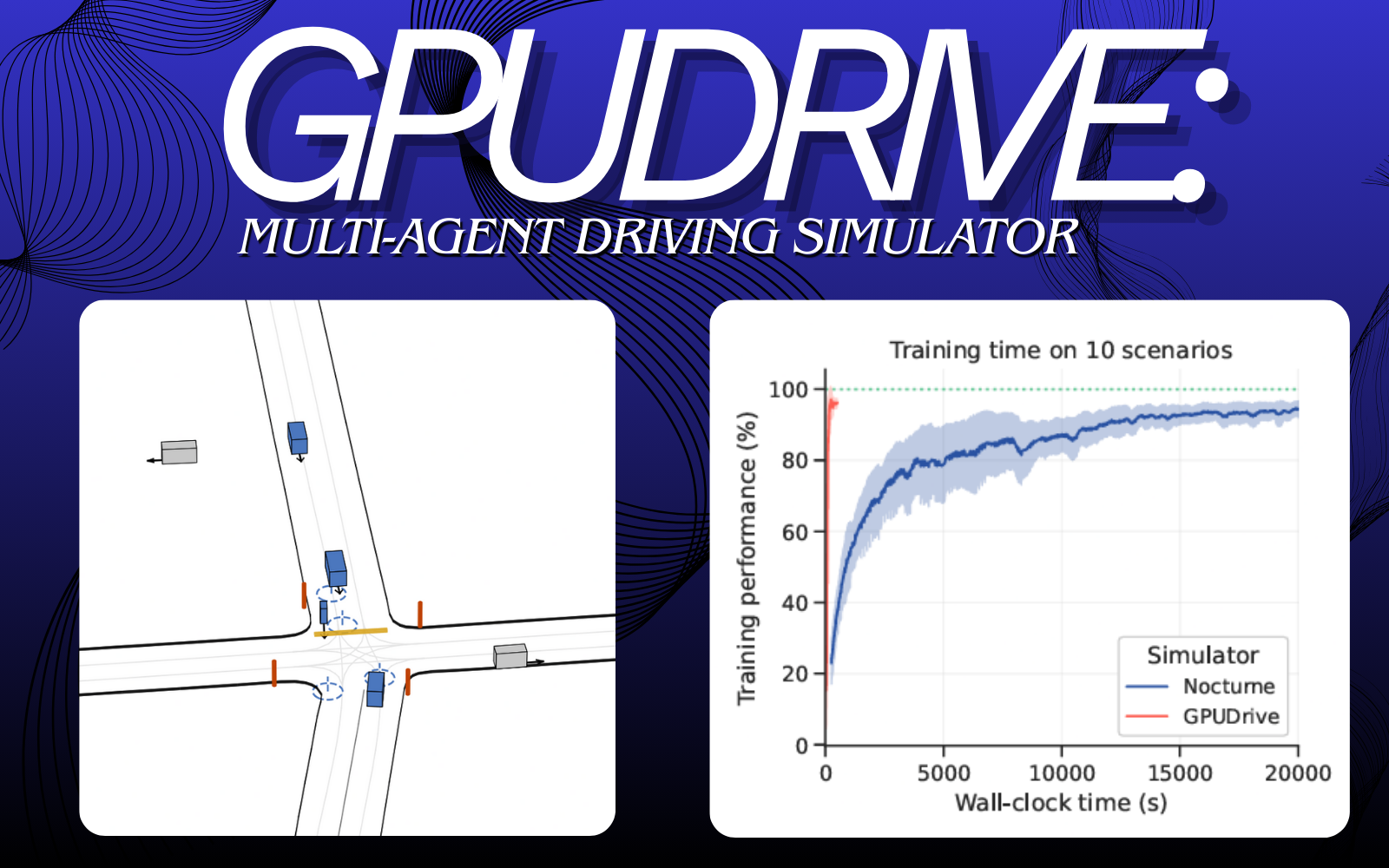

"GPUDrive achieves a 200-300x training speedup, solving 10 scenarios in less than 3 minutes compared to approximately 10 hours in Nocturne."

From the GPUDrive paper, "GPUDrive: Data-Driven, Multi-Agent Driving Simulation at 1 Million FPS"
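The quoted range can be sanity-checked with simple arithmetic. Taking the rounded figures at face value (an assumption, since the quote says "approximately" and "less than"), 10 hours against 3 minutes gives 200x. The 2-minute case below is a hypothetical value chosen only to show what run time would correspond to 300x.

```python
def speedup(baseline_minutes, new_minutes):
    """Ratio of baseline wall-clock time to new wall-clock time."""
    return baseline_minutes / new_minutes


NOCTURNE_MINUTES = 10 * 60  # "approximately 10 hours" from the quote

print(speedup(NOCTURNE_MINUTES, 3))  # 200.0 (the quoted 3-minute bound)
print(speedup(NOCTURNE_MINUTES, 2))  # 300.0 (hypothetical 2-minute run)
```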

In our experiments, GPU execution significantly outperformed CPU execution, with steps per second increasing rapidly as the number of parallel environments grew. Success rates were only slightly higher on GPU, but the dramatic reduction in training time made GPU-based simulation far more efficient overall.

Project information

- Category: Research

- Technologies: Python, C++, CUDA Toolkit, CMake, Ubuntu/Linux, Waymo Open Motion Dataset, Gymnasium (Torch & JAX environments)

- Skills: GPU computing and benchmarking, multi-agent reinforcement learning simulation, environment configuration and debugging, performance analysis, experimental evaluation

- Project date: November 2025 - December 2025

- Final Presentation: Download

- Project URL: GitHub Link